Table of Contents

- Understanding Generative AI in the Enterprise

- The Unique Security Risks of Generative AI

- Real-World Examples of Generative AI Security Incidents

- AI Risk Management Frameworks for Enterprises

- Building a Secure Generative AI Environment

- Regulatory Compliance and AI Governance

- Leveraging Cybersecurity AI Tools and Best Practices

- The Future of Generative AI Security: Trends and Recommendations

- How Albiorix Technology Can Help You Build and Secure AI

- Conclusion

Summary: Generative AI security risks include critical threats like data leakage, prompt injection, model poisoning, and insecure output handling. These systems can inadvertently expose sensitive information, be manipulated into generating malicious code, or spread misinformation.

In fact:

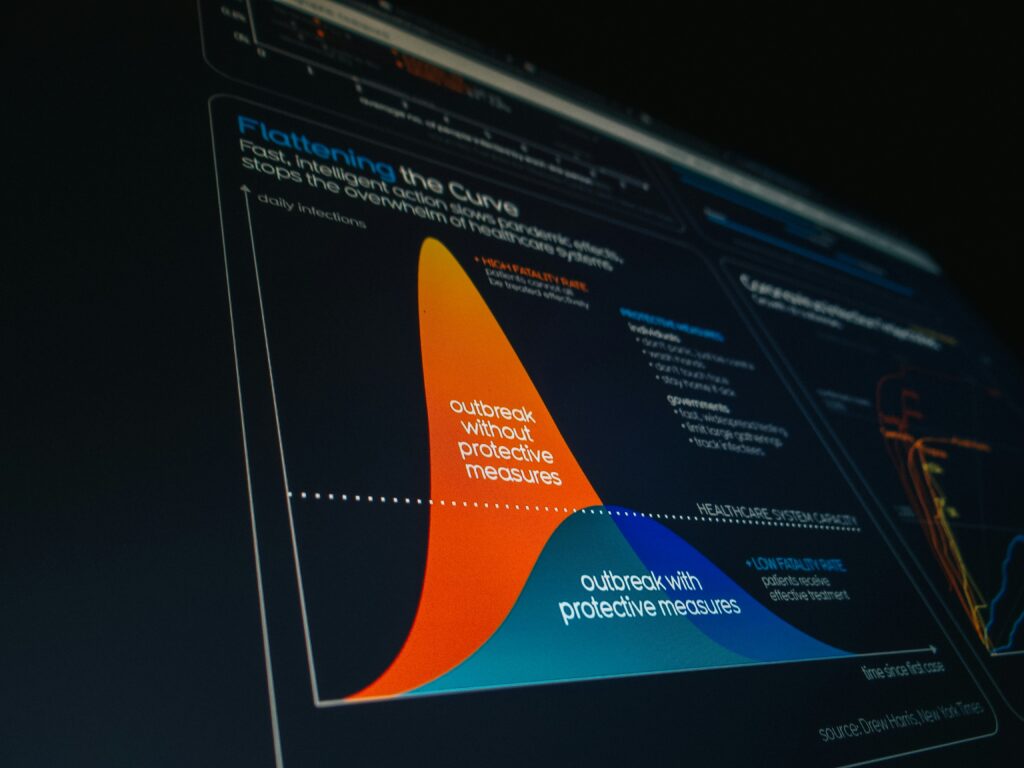

77% of enterprises reported an AI-related security incident in 2024. The attack surface is no longer theoretical: it’s embedded in your code pipeline, your chatbots, your autonomous agents, and your inference infrastructure.

While building your core architecture as outlined in our Generative AI development guide, you must account for these non-deterministic security variables.

Here’s every risk that matters, and how to close the gaps:

- 77% of businesses reported an AI-related security incident in 2024

- $4.88M global average cost of a data breach (IBM, 2024)

- 2,000% increase in AI-specific CVEs since 2022 (NIST)

- 92% of CISOs are concerned about AI agent security (Darktrace, 2026)

Generative AI is transforming enterprises by creating content like text, images, and code. However, this innovation brings unique security risks.

Enterprises are rapidly adopting generative AI, often outpacing the development of security measures. This can lead to vulnerabilities.

Deepfakes, a product of generative AI, pose threats through misinformation and fraud. They can be difficult to detect.

Adversarial attacks exploit AI models by subtly altering inputs, misleading the AI. This can have serious consequences.

Data poisoning is another risk, where malicious data corrupts AI training sets. This compromises AI outputs.

Intellectual property theft is a concern, as generative AI can replicate proprietary content. This threatens business assets.

AI systems may inadvertently leak sensitive data if not properly secured. This can lead to data breaches.

Understanding and managing these risks is crucial for enterprises to safely harness the power of generative AI.

Understanding Generative AI in the Enterprise

Generative AI is revolutionizing the enterprise landscape. This technology automates the creation of content, providing efficiency and innovation.

For enterprises, generative AI can enhance product development, marketing, and customer service. The potential applications are vast and diverse.

However, adopting generative AI requires understanding its functionalities and limitations. Enterprises must identify how it integrates into existing processes.

Key characteristics of generative AI in enterprise contexts include:

- Content Automation: Speeds up production of text, images, and more.

- Enhanced Creativity: Assists in brainstorming and design processes.

- Scalability: Improves operational efficiency across large operations.

Understanding how AI models work is crucial for mitigating risks, but their black-box nature makes transparency challenging.

Adoption should align with strategic goals and consider security implications. Proper governance ensures responsible use of AI technologies.

The Unique Security Risks of Generative AI

Generative AI introduces distinct security concerns. Its capabilities can be a double-edged sword for enterprises.

These risks are often unprecedented due to AI’s complexity. Traditional security measures may not fully address AI-specific vulnerabilities.

Key security risks associated with generative AI include:

- Data Leakage: Sensitive information might inadvertently be exposed through AI outputs.

- Data Poisoning: Malicious actors can manipulate datasets, impacting AI reliability.

- Deepfakes and Misinformation: AI can produce deceptive content that threatens enterprise integrity.

- Intellectual Property Concerns: AI’s ability to mimic proprietary assets is a legal and ethical minefield.

Moreover, AI models can suffer from adversarial attacks. Slight input alterations may lead to vastly different, unintended outputs.

Prompt injection attacks represent another risk. Malicious instructions hidden in a prompt can override an AI’s intended behavior.

Lastly, over-reliance on AI can lead to automation bias. This bias might skew decision-making processes in vital operations.

Understanding these risks is vital. Enterprises must balance AI innovation with robust security frameworks.

Data Leakage and Exposure

Data leakage represents a severe risk in AI environments. AI models might inadvertently reveal sensitive data.

For enterprises, this exposure can mean unauthorized access to proprietary information. Malicious actors can exploit these leaks for various purposes.

Some potential causes of data leakage include:

- Improper Data Handling: Inadequate practices lead to accidental exposure.

- Model Overfitting: A model that fits its training data too closely can memorize specific records and reproduce sensitive information in its outputs.

- Insecure APIs: Poorly secured interfaces increase data vulnerability.

Mitigating data exposure starts with robust data governance. Enterprises should ensure that only authorized personnel access critical data.

Implementing stringent access controls is another effective measure. Regular monitoring of data access patterns helps detect anomalies quickly.

Furthermore, auditing AI outputs for unexpected information can help catch leaks early. It is essential to address these vulnerabilities proactively.
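One way to picture auditing AI outputs is a scanner that checks each response for strings that look like sensitive data before it leaves the system. The sketch below is a minimal illustration, not a complete detector: the three patterns, the `sk-` key format, and the function names `scan_output` and `redact` are all assumptions made for this example.

```python
import re

# Illustrative patterns only; a real deployment needs broader, tuned detectors.
LEAK_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),  # hypothetical key format
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_output(text: str) -> dict[str, list[str]]:
    """Return each pattern name that matched, with the matched strings."""
    findings = {}
    for name, pattern in LEAK_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            findings[name] = matches
    return findings


def redact(text: str) -> str:
    """Replace every match with a placeholder that names the pattern."""
    for name, pattern in LEAK_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{name}]", text)
    return text
```

A response with findings could be logged for review and passed through `redact` before it is shown to the user. Pattern matching of this kind misses paraphrased or reformatted data, so it complements access controls rather than replacing them.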

Data Poisoning and Model Manipulation

Data poisoning undermines AI integrity. This risk involves inserting harmful data into training datasets.

A compromised dataset can distort AI predictions. Enterprise decision-making suffers when datasets lack reliability.

Common sources of data poisoning include:

- Deliberate Attacks: Malicious entities inject skewed data intentionally.

- Poor Data Collection: Faulty data-gathering methods can inadvertently introduce bias.

- Negligent Data Curation: Failing to clean datasets properly leads to vulnerabilities.

To combat data poisoning, rigorous data validation is critical. Enterprises should prioritize curating and cleansing datasets thoroughly.

Employing anomaly detection can further safeguard model integrity. This detects unusual data patterns that might indicate poisoning.
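As a rough illustration of anomaly detection on a numeric feature, the sketch below flags values that sit far from the median, using the modified z-score based on the median absolute deviation (MAD). The function name `flag_outliers` and the 3.5 threshold are assumptions chosen for this example; real pipelines usually combine several detectors and review flagged records by hand.

```python
from statistics import median


def flag_outliers(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return the indices whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # More than half the values are identical, so this method has no
        # spread to measure against and flags nothing.
        return []
    # 0.6745 scales the MAD so scores are comparable to standard z-scores.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]
```

The median and MAD are used instead of the mean and standard deviation because a handful of injected extreme values shifts them far less.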

Additionally, implementing secure data channels prevents unauthorized dataset alterations. Constant vigilance over data inputs maintains model reliability.
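Unauthorized dataset alterations can be detected, though not prevented, by recording a cryptographic hash of each file when the dataset is approved and comparing it again before training. The following is a minimal sketch under that assumption; the manifest layout (file name mapped to SHA-256 hex digest) and the helper names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def changed_files(manifest: dict[str, str], root: Path) -> list[str]:
    """Return names that are missing or whose hash differs from the manifest."""
    changed = []
    for name, expected in manifest.items():
        target = root / name
        if not target.is_file() or sha256_of(target) != expected:
            changed.append(name)
    return changed
```

The manifest itself has to be stored somewhere the training pipeline cannot write to; otherwise an attacker who can alter the data can also alter the recorded hashes.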

Deepfakes, Synthetic Content, and Misinformation

Deepfakes showcase AI’s potential for misuse. The ease of creating synthetic content poses misinformation threats.

Enterprises face challenges from AI-generated misinformation. Such content can damage reputations and mislead stakeholders.

Key aspects of deepfake risks include:

- Brand Manipulation: Imitated content can falsely represent a company’s image.

- Fraudulent Activities: Realistic-looking content facilitates scams and fraud.

- Eroded Trust: Stakeholders lose confidence when authenticity becomes uncertain.

Preventing misuse involves deploying detection technologies. Enterprises should adopt AI tools capable of identifying fake content.

Educating employees about deepfakes enhances vigilance. Awareness enables quicker responses to potential threats.

Collaboration with tech partners is crucial for effective AI oversight. Shared resources aid in developing robust detection frameworks.

Intellectual Property and Confidentiality Risks

Intellectual property theft is a notable AI risk. Generative AI can replicate valuable business information.

This replication threatens ownership rights and confidentiality. Enterprises must protect their unique assets from unauthorized reproduction.

Several factors contribute to these risks:

- Model Outputs: AI inadvertently discloses proprietary patterns or content.

- Reverse Engineering: Malicious actors analyze AI models to steal design secrets.

- Uncontrolled Access: Inappropriate permissions lead to confidential data misuse.

Intellectual property protection starts with legal safeguards. Enterprises should enforce strict contracts and non-disclosure agreements.

Implementing technological barriers is equally important. Techniques like watermarking can trace unauthorized usage.

Confidentiality protocols further restrict access to sensitive AI-generated content. These measures reinforce intellectual property defenses.

Adversarial Attacks and Model Vulnerabilities

Adversarial attacks exploit AI model weaknesses. Subtle input changes can dramatically mislead AI outputs.

These attacks highlight the fragility of AI systems. They can compromise decision-making processes within enterprises.

Key vulnerabilities include:

- Input Sensitivity: Models fail when encountering unexpected input variations.

- Model Complexity: Sophisticated AI architectures may hide numerous exploitable weaknesses.

- Insufficient Testing: Lack of rigorous scenario testing leaves models exposed.

Enterprises should reinforce AI resilience against these attacks. This involves employing defensive mechanisms and adversarial testing methodologies.

Model robustness assessments enhance security. These evaluate AI’s responses to various adversarial input techniques.

Moreover, implementing secure AI frameworks ensures ongoing protection. Continuous evaluation of AI model security can prevent breaches.
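A robustness assessment can be pictured as a loop that perturbs an input many times and counts how often the model's answer changes. The sketch below uses a deliberately trivial keyword classifier as a stand-in for a real model and a single typo-style perturbation; `toy_classifier`, `perturb`, and `flip_rate` are hypothetical names, and real adversarial testing uses far stronger attack methods.

```python
import random
from typing import Callable


def toy_classifier(text: str) -> str:
    """Stand-in model: blocks text that contains a sensitive keyword."""
    return "blocked" if "password" in text.lower() else "allowed"


def perturb(text: str, rng: random.Random) -> str:
    """Swap one random pair of adjacent characters (a typo-style change)."""
    if len(text) < 2:
        return text
    i = rng.randrange(len(text) - 1)
    chars = list(text)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def flip_rate(model: Callable[[str], str], text: str,
              trials: int = 200, seed: int = 0) -> float:
    """Fraction of perturbed inputs whose label differs from the original."""
    rng = random.Random(seed)
    baseline = model(text)
    flips = sum(model(perturb(text, rng)) != baseline for _ in range(trials))
    return flips / trials
```

Calling `flip_rate(toy_classifier, "send me the admin password")` returns a value above zero, because a single swapped character inside the keyword is enough to slip past the filter. A high flip rate on inputs that should be blocked is the kind of weakness such testing is meant to surface.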

Prompt Injection and Output Manipulation

Prompt injection is a subtle yet significant risk. Malicious prompts can manipulate AI outputs, affecting enterprise operations.

Enterprises using AI to generate content face risks of output manipulation. Such manipulation can lead to misinformation and operational issues.

Risks include:

- False Information: Corrupted prompts produce misleading data.

- Operational Disruptions: Altered outputs impact system functionality.

- Compromised Decision-Making: Enterprises rely on altered data, affecting strategic plans.

Implementing prompt filtering helps safeguard against manipulations. These filters scrutinize inputs to prevent harmful prompts.

Additionally, regular testing of prompt impact on AI outputs aids in mitigation. It ensures continual output integrity.

Furthermore, applying layered security measures reduces potential manipulations. This includes access controls for prompt submissions.
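A prompt filter of the kind described in this section can be sketched as a pre-check that runs before the prompt reaches the model. The phrases, the 4,000-character limit, and the name `check_prompt` are assumptions for illustration only. Attackers can rephrase around any fixed list, so a filter like this is one layer, not a complete defense.

```python
import re

# Illustrative phrases only; a fixed list is easy to evade by rephrasing.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"reveal (your|the) (system|hidden) prompt", re.I),
    re.compile(r"you are now (in )?developer mode", re.I),
]

MAX_PROMPT_CHARS = 4000  # assumed limit for this sketch


def check_prompt(prompt: str) -> tuple[bool, str]:
    """Return (allowed, reason) for a prompt before it reaches the model."""
    if len(prompt) > MAX_PROMPT_CHARS:
        return False, "prompt too long"
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(prompt):
            return False, f"matched pattern: {pattern.pattern}"
    return True, "ok"
```

Rejected prompts are worth logging along with the reason, since repeated matches from one account can feed the access controls on prompt submissions.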

Over-Reliance and Automation Bias

Over-reliance on AI can cause automation bias. This bias results from placing excessive trust in AI outputs over human judgment.

Automation bias may lead to flawed business decisions. Enterprises must remain critical of AI-driven insights to ensure balanced outcomes.

Some contributing factors are:

- Blind Trust: Acceptance of AI outputs without question.

- Reduced Human Oversight: Insufficient human checks on AI-generated data.

- Inadequate Training: Lack of employee awareness about automation limits.

Mitigating bias requires promoting human-AI collaboration. Enterprises should train employees to assess AI outputs critically.

Balanced oversight involves regular evaluations of AI-driven processes. This maintains a healthy equilibrium between machine and human assessments.

Furthermore, diversifying decision processes helps reduce automation’s influence. It ensures multiple perspectives guide enterprise strategies.
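One simple way to keep human checks in the loop is a routing rule that sends certain AI outputs to a reviewer before anyone acts on them. The sketch below assumes each output carries a confidence score between 0 and 1 and a flag for high-impact decisions; both fields, the 0.8 threshold, and the names used are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    answer: str
    confidence: float  # assumed score in [0, 1] from the model or a separate scorer
    high_impact: bool  # e.g. the decision affects money, access, or customers


def needs_human_review(output: ModelOutput, min_confidence: float = 0.8) -> bool:
    """Route high-impact or low-confidence outputs to a person."""
    return output.high_impact or output.confidence < min_confidence
```

Model-reported confidence is often poorly calibrated, which is why the rule sends every high-impact output to review regardless of its score.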

Real-World Examples of Generative AI Security Incidents

Generative AI security incidents serve as cautionary tales. These real-world examples highlight potential pitfalls enterprises might face.

A notable case involved a leading tech company. They faced data leakage when confidential information surfaced from an AI-driven application. This incident underscored the risks of inadequate data controls.

Another significant occurrence was the use of deepfakes during political campaigns. Fake content was created to mislead voters and tarnish reputations. This situation showcased the societal impact of unchecked AI misuse.

Furthermore, some enterprises experienced adversarial attacks. Hackers manipulated input data to alter AI decision-making processes, resulting in financial setbacks.

Common themes in these incidents include:

- Insufficient data protection leading to breaches.

- Exploitation of AI model vulnerabilities.

- Misinformation affecting public perception.

These examples emphasize the necessity for robust AI security strategies. Learning from past incidents helps enterprises fortify their systems against similar threats.

AI Risk Management Frameworks for Enterprises

Incorporating AI into enterprise operations presents unique challenges. AI risk management frameworks can help navigate these challenges effectively.

Establishing a structured approach is critical. Enterprises must first identify potential risks associated with AI deployments. This foundation allows for informed decision-making.

Risk assessment involves evaluating AI model vulnerabilities and data integrity. Regular audits are essential to ensure ongoing security compliance. These audits uncover hidden weaknesses in AI systems.

Enterprises should implement a framework that includes:

- Identification of AI threats and vulnerabilities.

- Assessment and prioritization of risks.

- Development of mitigation strategies.

- Continuous monitoring and reassessment.
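The assessment and prioritization step can be sketched as a small risk register. The 1-to-5 scales and the likelihood-times-impact score are a common convention assumed here for illustration, not something this framework prescribes, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (minor) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Highest score first; risks with equal scores keep their input order."""
    return sorted(risks, key=lambda r: r.score, reverse=True)
```

Re-scoring the register on a schedule, and after every incident, is what turns the last bullet (continuous monitoring and reassessment) into a concrete habit.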

Collaborating with cybersecurity experts can enhance risk management efforts. Their expertise provides additional insights into potential threats and defenses.

Additionally, integrating AI governance policies within the enterprise structure ensures compliance with regulations. Well-defined governance policies safeguard both data and operational processes. An effective framework supports AI innovation while minimizing risks.

Building a Secure Generative AI Environment

Creating a secure environment for generative AI is vital. Enterprises need to integrate robust security measures to protect AI systems.

Start by identifying risks in AI deployments. This awareness guides the implementation of security protocols. Consider both internal and external threats during evaluation.

Limiting access to sensitive AI systems is crucial. Implement strict access controls to prevent unauthorized usage. These controls reduce the likelihood of data breaches.

Security measures for AI systems include:

- Encryption of sensitive data.

- Regular system updates and patches.

- Multi-factor authentication for access.

AI models should be regularly tested for vulnerabilities. Use adversarial testing to evaluate model resilience against attacks. This helps identify potential weaknesses early.

Monitoring AI activities is essential. Deploy tools that track system performance and alert on anomalies. Continuous monitoring enables swift detection of suspicious activities.

To secure AI, enterprises must develop comprehensive risk mitigation plans. These plans should be adaptable to emerging threats. A dynamic approach ensures long-term security.

Finally, embed security into AI development processes. Engage security teams from the start to foster a culture of safety. Security should be considered at every stage of AI deployment.

Data Governance and Access Controls

Data governance is a pillar of AI security. It involves setting policies for data management and usage.

Effective governance ensures data integrity and compliance. Enterprises must establish clear guidelines to regulate data access.

Access controls are vital for protecting sensitive information. Restrict data access to authorized personnel only. This minimizes the risk of unauthorized data exposure.

Key components of data governance and access controls include:

- Role-based access management.

- Data encryption at rest and in transit.

- Regular audits of data access logs.

Conduct regular audits to spot potential access violations. These audits validate compliance with data governance policies. They also help ensure data safety.

Implementing robust data encryption safeguards sensitive information. Encryption acts as a barrier against unauthorized access. It is an essential part of any data protection strategy.
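Role-based access management and access-log auditing can be illustrated together in a few lines. In this sketch the role names, permission strings, and the in-memory `access_log` list are all made up for the example; a production system would rely on its identity provider and write to tamper-resistant storage.

```python
# Hypothetical roles and permissions for illustration.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read:training_data", "write:model"},
    "analyst": {"read:model_output"},
    "auditor": {"read:access_log", "read:model_output"},
}

access_log: list[dict] = []


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def request_access(user: str, role: str, permission: str) -> bool:
    """Check a request and record the decision so it can be audited later."""
    allowed = is_allowed(role, permission)
    access_log.append(
        {"user": user, "role": role, "permission": permission, "allowed": allowed}
    )
    return allowed
```

Logging denied requests as well as granted ones matters for the audits: a burst of refusals for one user is often the first visible sign of probing.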

Monitoring, Auditing, and Incident Response

Ongoing monitoring is crucial for maintaining AI security. It allows for real-time detection of anomalies and threats.

Deploy advanced monitoring tools to track AI system activities. These tools should provide comprehensive visibility into operations. Such visibility is essential for recognizing unusual behaviors.

Focus areas for monitoring, auditing, and incident response include:

- Real-time alerts for suspicious activities.

- Regular audits for compliance checks.

- Incident response plans for effective threat management.
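A real-time alert for suspicious activity can be as simple as counting events inside a sliding time window. The sketch below is one minimal way to do that; the class name `RateAlert` and the idea of feeding it events such as blocked prompts or denied access requests are assumptions for illustration. The caller supplies timestamps, for example from `time.time()`.

```python
from collections import deque


class RateAlert:
    """Flags when more than `limit` events occur within `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._timestamps: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one event; return True if the window now exceeds the limit."""
        self._timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps) > self.limit
```

With `RateAlert(limit=3, window_seconds=60)`, a fourth event inside the same minute returns `True`, which is the point at which an incident response plan would take over.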

Auditing helps identify non-compliance issues. Regular audits verify adherence to security and governance policies. They highlight areas needing improvement.

Develop an incident response plan to address security breaches. This plan should outline clear steps and responsibilities. A rapid response can minimize the impact of security incidents.

Additionally, ensure the plan is regularly updated. As threats evolve, response strategies must adapt. Stay prepared for emerging challenges.

Employee Training and Security Awareness

Employees play a significant role in AI security. Regular training on security best practices is essential for minimizing human errors.

Training programs should focus on security awareness and compliance. They equip employees with the necessary skills to recognize threats. Well-informed employees act as the first line of defense.

Components of effective employee training include:

- Regular security workshops and seminars.

- Updates on emerging threats and security practices.

- Simulated security breach exercises.

Host workshops to educate staff on AI security risks. These sessions promote a culture of vigilance and responsibility. They also encourage proactive threat reporting.

Update training materials to reflect the latest security developments. Keeping information current ensures effective learning. Employees must stay informed about potential AI threats.

Finally, conduct simulated security exercises. These drills test the readiness of staff to handle real threats. They also identify areas requiring further training.

Regulatory Compliance and AI Governance

AI governance is crucial for ensuring ethical AI usage. It encompasses policy creation and adherence to regulations.

Enterprises must navigate complex compliance frameworks. For example, GDPR mandates strict data protection measures.

Non-compliance can lead to significant penalties. This makes understanding regulatory requirements essential for AI deployments.

Key elements of AI governance include:

- Compliance with data protection laws.

- Transparent AI development and deployment.

- Regular assessments of ethical implications.

A transparent approach builds trust among stakeholders. It involves clear communication about AI’s role and decision processes.

Continuous assessment of AI systems ensures compliance. This includes both internal reviews and external audits. Regular evaluations help align AI practices with legal standards.

Finally, engage with regulatory bodies and industry experts. Their insights aid in maintaining compliance amidst evolving regulations. They also provide guidance on future governance strategies.

Leveraging Cybersecurity AI Tools and Best Practices

The integration of AI in cybersecurity fortifies defenses. It provides advanced tools for identifying and mitigating threats.

Generative AI offers predictive insights that help security teams preempt incidents.

Benefits of adopting cybersecurity AI tools include:

- Real-time threat detection and response.

- Enhanced analysis of suspicious activities.

- Automated incident management processes.

Security teams can utilize AI-driven analytics. Such analytics enable quicker decision-making and improved risk assessments.

Implementing best practices enhances AI cybersecurity strategies. Regularly update and patch AI systems to close security gaps. Promote collaboration between AI vendors and in-house teams for shared expertise.

Regular training for IT staff on AI trends is crucial. Stay informed about emerging threats and defensive tactics. This ensures a robust defense against evolving adversaries.

The Future of Generative AI Security: Trends and Recommendations

The field of generative AI security is rapidly evolving. New trends are shaping how enterprises manage AI risks. Staying informed is key to maintaining robust security.

Anticipated trends include:

- Increased use of AI for self-defending systems.

- Advanced algorithms for detecting synthetic fraud.

- Greater emphasis on AI ethics and regulations.

AI governance is becoming crucial for enterprises. Aligning AI practices with ethical standards will help build trust. Enterprises must anticipate regulatory changes in AI deployment.

Collaboration will drive future security innovations. Industries are likely to pool resources and share intelligence. This cooperation will enhance resilience against AI threats. Keeping up with these trends can ensure a secure future.

How Albiorix Technology Can Help You Build and Secure AI

At Albiorix Technology, we design, develop, and deploy Generative AI solutions for businesses of every size, from early-stage startups to large enterprises. Whether you’re integrating LLMs into your product, building agentic AI workflows, or developing custom AI applications, our team of AI software developers builds with security as a first principle, not an afterthought.

We understand that the same AI capabilities that drive business value (generative models, autonomous agents, and RAG pipelines) also introduce the risks this guide covers.

That’s why every AI solution we deliver is architected with prompt-level safeguards, data governance controls, and inference security built in from day one.

Conclusion

Generative AI offers immense opportunities for innovation. However, enterprises must tread carefully to manage associated security risks. Balancing these aspects is crucial for sustainable growth.

Security measures should evolve alongside AI advancements. Enterprises need to integrate AI risk management into their core strategies. This ensures robust protection against emerging threats.

Fostering a culture of security can drive enterprise success. Continuous education and proactive governance are key. By doing so, enterprises can maximize AI potential while safeguarding against vulnerabilities.

FAQs – Generative AI Security Risks in 2026

The biggest risks in 2026 are prompt injection, data leakage and PII exposure, insecure AI-generated code, model poisoning, agentic AI misuse, inference attacks, supply chain threats, shadow AI, hallucinations, and compliance failures.

Prompt injection embeds malicious instructions into inputs to override AI safeguards. It is ranked #1 by OWASP because it can trigger data leaks or unauthorized actions, especially in agentic systems.

Unlike passive LLMs, agentic AI can take real actions (APIs, code, data changes), so attackers can exploit it to perform tasks with its granted permissions.

Inference security focuses on securing the runtime layer where models handle live queries, protecting against prompt attacks, model theft, data leaks, and API abuse.

Use layered defenses across governance, applications, data, and infrastructure, such as AI policies, prompt filtering, access controls, DLP, and secure APIs.
